Is Aschenbrenner's 165-page paper on AI the naivety of a 25-year-old?

In uncertain times, everyone needs a safety team

Edit 3 [15 Jun]: I’m reading that the US and OpenAI did take this seriously. I think the question might be settled (Leopold’s plea has been acknowledged), so I’ll take a walk, call my brother, and do a mix of procrastination and work. I hope Europe will take a look at the paper because it involves cooperation, competition and regulation. Good luck to Mistral in France and ASML in the Netherlands, Europe is counting on you! As for Stability in London, I hope you recover.

Edit 2 [14 Jun]: Sabine Hossenfelder just posted an analysis with relatively good counter-points: Leopold is in an SF bubble and, unless there are serious incentives, his predictions are off by an order of magnitude for power and data reasons. I do not agree with her Minsky counter-example, because you could offer counter-examples in the other direction: Turing’s work on breaking Enigma (computing power saving millions of lives), Szilard’s work affecting hundreds of thousands of lives, etc.

Edit [13 Jun]: A person on Hacker News read this post and complained that I don’t offer a thoughtful analysis of the paper. I agree, which is why the title is a question. Luc suggested Zvi Mowshowitz, who is one of the places where I got the link and the opportunity to start reading it. I suggest Aaronson’s analysis.

As a nerdy teen I hated neural networks from data science because I couldn’t train one to multiply two-digit numbers and had a friend who wanted to build a movie scene detector like Shazam did with songs, which I couldn’t do no matter how I tried — perceptual hashing + NNs — it was too early. I believe(d) that the natural human brain is a complex thing that will never, ever get approximated by a computer due to its sheer complexity:

hundreds of millions of years of development (neo-mammalian theory) compared to 1-2 centuries of computers

synapses have very complicated spiking patterns which no computer scientist or medical doctor really understands, apart from things like detecting seizures

scanning the brain is hard, and most glucose and EEG techniques are like putting a voltmeter on your laptop’s charging port and expecting to ‘measure’ the code of the app that is running.

even one neurotransmitter like dopamine controls muscle movement, reward, lactation (!), expectations — and the brain has 60+ of them, in a complex loop.

every time a person tries to artificially make something similar using AI, people feel some interest and decide to fund research into it, but often a so-called AI winter follows, and there have been two AI ‘winters’ already (1974–1980 and 1987–2000). During an AI winter, academia and business lose interest and the money dries up.

And I still don’t know how complex the brain is and whether neural networks can approximate it sufficiently. To this day, I am fascinated by scientists like Donald Knuth who work on non-AI data structures and algorithms.

However, in 2019 someone released AI Dungeon, a text game based on something called GPT-2, and shared it on social media. I clicked on it, it asked me what to do, and I typed “go to Macedonia and drive south”, to which I got a response that I was now in Greece and an elderly woman named Eleni was greeting me. It felt uncanny, especially since everyone in the Balkans knows the borders and common Balkan names. At that point I had a gut feeling that this would explode, so I messaged a computer science professor and we talked about it. It wasn’t going to disappear, and neural networks weren’t that bad. I don’t have the transcript, but I found a segment of one Dungeon saved on my Gist profile, where I’m stuck in Luton and want to go to Skopje:

What I do know is that my future self will regret it if I don’t act on a gut feeling about something new and post a link on my blog to a paper named Situational Awareness. Its author, ex-OpenAI employee Leopold Aschenbrenner, spent a lot of time writing it, and I like people who put a lot of effort into pursuing things.

🔗 https://situational-awareness.ai/wp-content/uploads/2024/06/situationalawareness.pdf

Worst-case scenario: The paper is a nothing-burger, I get embarrassed by this post, and he is naive or paranoid about the timescale and impact.

Average case: Leopold’s work makes some bad and some good points about AI and its progress in the next century.

Best-case scenario: Leopold has important things to say about what he saw at OpenAI as a part of the Safety/Alignment teams (which… no longer exist?):

[…] The smartest people I have ever met—and they are the ones building this technology. Perhaps they will be an odd footnote in history, or perhaps they will go down in history like Szilard and Oppenheimer and Teller. If they are seeing the future even close to correctly, we are in for a wild ride.

He dedicates the paper to Ilya Sutskever, a co-author of AlexNet and a co-founder and former chief scientist of OpenAI. And he wants the world to know that AI progress may bring a lot of success but problems as well, and that governments should be careful.

I would love to be confident enough to be able to analyze it or say if it’s true or not, but I don’t feel that yet. My gut feeling says ‘yes’ — transformer-based models may have big implications, but I cannot back it up with a falsifiable statement. What I can analyze:

I fully agree with this: we’re running out of internet data. That could mean that, very soon, the naive approach to pretraining larger language models on more scraped data could start hitting serious bottlenecks.

It doesn’t take a genius to agree that the chart of FAANG expenditures is correct.

I have no idea about the hype of San Francisco and whether that changes the AI timelines: I haven’t been there yet and I don’t know if it’s such a competitive advantage compared to the rest of the world. Historically it has been, but it has its flaws too. What matters is the balance of compute vs data with a sprinkle of algorithmic ingenuity.

If you want a summary of the paper, journalists did that using GPT (GPT analyzing GPT 😂?). I will possibly try to dissect segments of those 165 pages in a future post, but I really recommend these two Zvi posts, as well as the Patel podcast:

I would like to finish this post with two random facts:

The Riemann Hypothesis, a 165-year-old unsolved math problem whose proof is worth $1M, now has a piece cracked by two mathematicians: https://mathstodon.xyz/@tao/112557248794707738

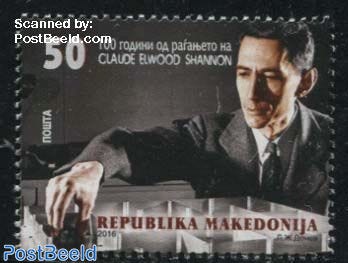

I learned that a designer from my country of origin proposed a postage stamp celebrating the birth of Claude Shannon (one of the key people who laid the foundations of artificial intelligence):

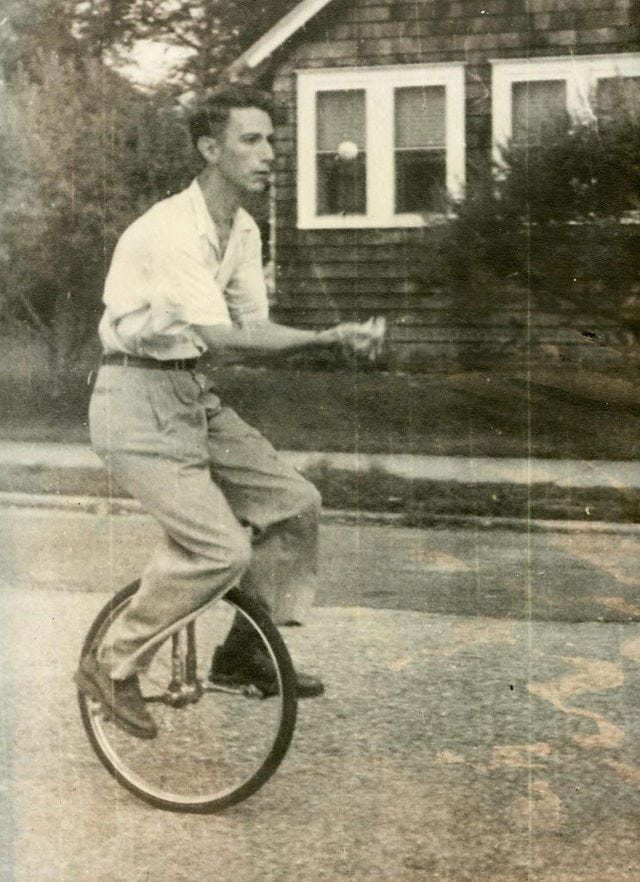

P.S. Shannon, although shy and quiet by nature (the only time he would get loud was if you challenged his opinions on intellectual matters at Bell Labs), was quite a funny guy:

I propose you (and those you link to) are overlooking (at least the importance of) one fact: the human mind is composed of both hardware and (many layers of) software.